How Blue Zoo are approaching AI & Real-time animation in 2023

Our co-founder Tom Box was invited to speak at the Annecy Animation Film Festival to share our studio's approach to the fast-moving topics of real-time and AI in animation, especially as a B Corp.

The short intro-presentation demonstrated our approach to technology. We started Blue Zoo as a digital animation studio in the year 2000, when Maya first became available on an affordable home PC; this coincided with the birth of digital TV in the UK. The combination of these two events meant animation was in huge demand, and we could afford to start a studio without a huge investment. So our studio, and all the jobs we've created, exist only because we embraced technological advancements from our very first day.

Over the 23 years since, we've continued to embrace and discover new advances, as complacency is the enemy of any business. So we have experimented with optimising our productions to ensure you see every penny of the budget on screen.

Real-time

In 2015 we did this by experimenting with GPU rendering, creating a short film called "More Stuff" that used GPU rendering to make our renders up to 10x faster. But we did not do this to maximise profit; instead, we used the savings to maximise quality, giving our studio a worldwide reputation for outstanding work. When it comes to business, we play the long game, not the short game. This led to us becoming the first big studio in the world to switch entirely to GPU rendering for long-form animation in 2016.

Not long after this, we experimented with real-time rendering using game engine technology, including Epic's Unreal Engine. We explored how this could help us experiment with new styles and production efficiencies, enabling further creative fidelity. Again through our short films programme, we created a film called "Ada", with a hand-drawn pencil style. We ripped up our standard production pipeline and rebuilt it, which enabled highly optimised assets to render in real-time. This meant Dane Winn, the director, could craft, iterate and experiment with the storytelling until the very end of production, maximising audience satisfaction.

But this optimisation comes at a cost, and it doesn't always pay off. It requires a different way of working and restricts more advanced technical capabilities, such as squashy, cartoony rigs and rendering technology such as realistic light physics. Therefore we use technology like Unreal Engine when the value-add outweighs the limitations. So far this has been when we work with IPs already in Unreal or on projects needing interactive or instant renders.

Another use case has appeared in the last few years: YouTube. As younger audiences move to YouTube, so must we. But a problem arises when we can't keep up with the pace of delivery that YouTube requires. We could solve this by creating cheap keyframed animations as quickly as possible, lowering labour costs and reducing quality. But our studio's goal is to be utterly proud of our creative work and to look after our artists' well-being, so squeezing our artists to the maximum would shatter this goal.

Therefore we've developed a new technology called "MoPo", where a VR animator can digitally puppeteer multiple characters in a virtual production environment without the physical limits of motion capture technology. We've embraced real-time technology and VR controllers to allow for immersive content production, aided by an Epic MegaGrant to help fund the technical development. This allows us to "animate" up to a minute of character content daily at a very high render quality to keep up with YouTube demands while enabling our artists to enjoy the process thoroughly. You can check out our first IP made using MoPo, "Silly Duck", on our Little Zoo YouTube Channel.

Artificial Intelligence

Another technical frontier is AI. As with real-time, we want to experiment with every new technology to see how it can help our studio's mission. But as a B Corp, we must do this while carefully considering all the ethics, sustainability and governance around it, ensuring it results in a net positive for everyone.

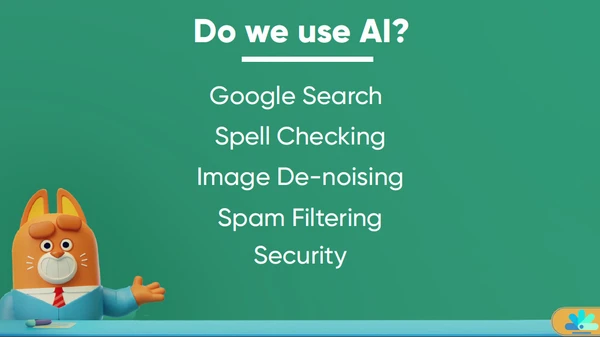

We have used AI in our studio for a long time, but this has mainly been discriminative AI - using AI to categorise and recommend entities, such as using Google, spam detection or spell check, which no one raised an eyebrow at.

But this all changed with the advent of generative AI (a fancy word for predictive AI), which was turbo-charged by the invention of the Transformer architecture by Google researchers in 2017. This gave AI models context and underpins the Generative Pre-trained Transformer (GPT) models behind OpenAI's ChatGPT. Related generative techniques were applied to images too, which led to tools like MidJourney.

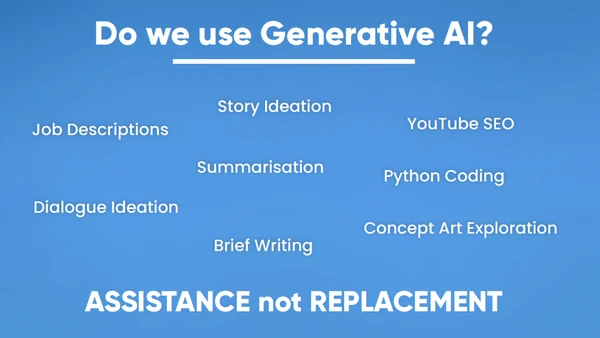

So how do we approach that? Well, we are currently using generative AI where it helps "automate the boring stuff", such as helping write job specs, and where it helps explore and communicate ideas, such as building mood boards instead of using Google Images.

Just last month, we needed to write a job-role description for a "VR puppeteer animator" - a job role that hadn't previously existed. So we copied a few bullet points from job descriptions for a 3D animator, a puppeteer and a VR artist and asked ChatGPT to write a new, combined job spec, and it did a stellar job! In this instance, AI saved our team hours of work.

None of these uses swaps hiring humans for hiring computers; these tools augment our artists' creativity, not replace it. With this technology more than ever before, we need to experiment cautiously while considering all of the impacts, such as privacy, environmental footprint, copyright and wider societal effects. Therefore we are issuing guidelines in our studio on how we do this and encouraging artists to experiment.

Ultimately, AI won't replace people doing jobs - other humans utilising AI tools will. But we want to use AI in exactly the same way as we used the speed gains from GPU and real-time rendering: to maximise the on-screen quality of our work through efficient use of artists’ creativity. Using AI as a short-term fix to minimise staffing costs is a race to the bottom; we want to use it to maximise quality for long-term profit gains to advance our studio’s mission. This is balancing profit with purpose, which is what B Corp is all about.

Q&A Section

Which jobs are at risk of disappearing, and which are emerging?

Computers excel at discrete, clearly explained tasks, and generative AI is no different. Asking a generative AI to re-write a sentence correctly is a lot easier to do well than asking it to write a "good" script. Therefore the jobs most at risk are those made up of more straightforward, well-defined tasks. The problem is that these tend to be entry-level assistant jobs.

Does this mean it will be harder for people to enter careers when AI reduces the number of entry-level jobs? VFX studios in the UK had the same concern when studios moved their roto teams to countries with cheaper labour. Because the artists' career path into VFX studios predominantly started in roto, there was a valid concern that removing roto teams would block entry into the industry. What happened? Those graduates just started at the next level of the career ladder, so they reached their desired career role in less time.

As to which jobs will emerge, the human differentiator will drive this. That differentiator is the heart: the ability to understand what another human is saying and interpret that through your own experiences and craft so it resonates with and pleases other humans. This heart is what makes stand-out content that is powerful and gets eyeballs. And eyeballs will always be where the money is, which drives corporate decision-making. So any jobs created will add this layer of heart on top of what the AI model is capable of, which is ultimately just statistical analysis and prediction.

Do you think AI should become an essential tool for creators in their daily work?

The question is not whether AI should become an essential tool; it already is one. If you use Google, you already use AI as an essential tool. People at Blue Zoo who write frequently use the writing assistance tool Grammarly (we used it to write this post!), which uses AI. The AI functionality is seamlessly baked in, and the same will continue to happen with generative AI.

If people take a divisive "AI = bad" stance, it could harm careers, just as it would if a 2D animator using paper and pencil refused to use TV Paint / Harmony / CelAction. These tools have removed some traditional jobs but created many more new ones. There are good uses of AI and bad; to brand all of it the same is reductive.

Many professionals believe AI can be a major risk for the industry’s future, raising ethical issues. How do you reconcile innovation and the protection of creators?

This question is the crux of any generative AI discussion, as no technical advancement before has ingested the hard labour of others in the way models have sucked up vast quantities of copyrighted text and imagery.

Some companies create the best work, utilising human brilliance to create content that captures the audience's mind. Some companies want to make content as quickly and cheaply as possible, without care for quality or ethics. These two types of companies have always existed and will continue to exist. Great artists will continue to be employed by the former.

Generative AI content will be tough to copyright, and we expect more laws to say so because of how the training data was sourced. Therefore companies will avoid using it in this way unless it has been "through" a human, as in using AI for creative ideation, just as writers may use a thesaurus or artists use Pinterest.

Therefore there is a potential future where humans with incredible talent (visual artists or voice artists) will license their talent to bespoke generative AI models, just as royalty-free libraries work today - enabling an additional source of income in parallel to their everyday work. Their licensed model offers a less customisable service than its human counterpart (just like a stock image) but increases rather than decreases the artist's future earnings.

Parting thoughts

The camera phone has created a whole new generation of amazing content creators who, two decades ago, would never have had the opportunity. Generative AI will do the same; it is just a tool, as a phone is.

This bar-lowering will do two things: it will uniquely empower new content creators and propel them into great things, but it will also result in a deluge of awful content. This content will have no heart; it will be just a byproduct of people hoping to make a quick bit of low-effort money. So the internet will be flooded with low-quality AI-generated content, but this will make the best work rise to the top, with the combined talents of individuals and studios being celebrated and hired. We've already seen this with people posting beautiful MidJourney artwork, with the crowd going wild.

At Blue Zoo, we pride ourselves on making content that stands out positively. An audience always enjoys a show more where they can tell the creators have had fun making it and put their heart into creating it. A statistical model cannot do this, and it shows every time.

Ultimately we need fewer click-bait headlines and more open, honest discussions covering all opinions, so we welcome any further questions, thoughts and comments!